Coded Bias

Director: Shalini Kantayya

Writer: Shalini Kantayya

Part of: this human world Film Festival

Seen on: 13.12.2020

Content Note: (critical treatment of) racism

“Plot”:

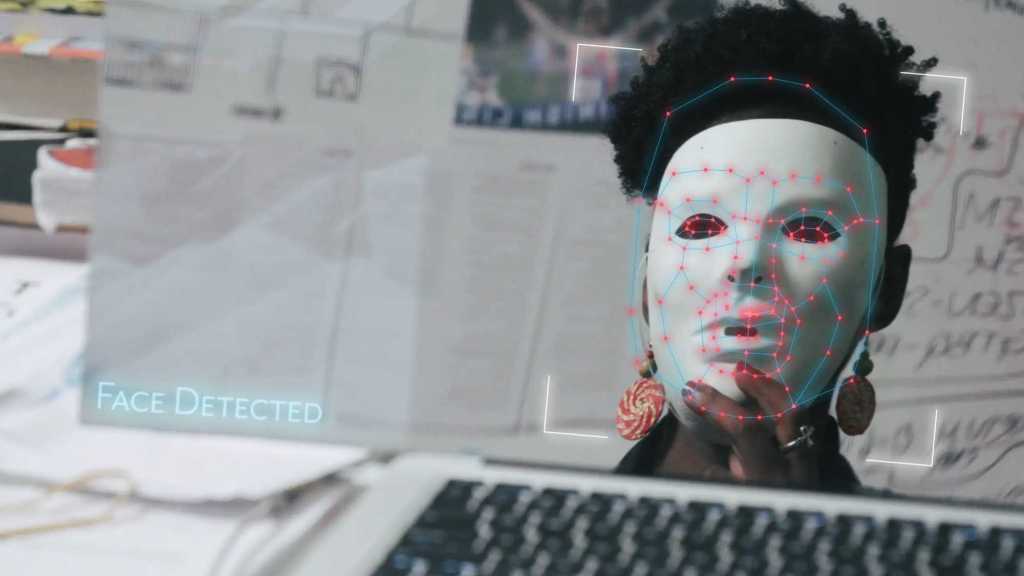

When MIT researcher Joy Buolamwini started working with facial recognition software for a class, she didn’t expect to discover that the AI is absolutely biased. She dug into the matter, uncovering more and more problems. Meanwhile, facial recognition is being used more and more for surveillance and police work, regardless of the problems that still haven’t been solved.

Coded Bias takes on a very timely topic, considering the racist and also sexist bias in apparently neutral software and algorithms and its implications for police work, among other things. It’s interesting, well-argued and so well-structured that time flies by and yet you never feel overwhelmed by the topic. It was the perfect choice of final film for the this human world Film Festival.

I have done a bit of (casual) reading on this topic, so I wasn’t entirely new to the concept of biased algorithms and how we bake human biases into software. But it was interesting to see it all laid out as neatly and consistently as it is in this documentary.

Especially because I wasn’t aware where the criticism actually came from – and going by this documentary, it was mostly female researchers, a lot of them Black women or women of color, who did the work of showing how problematic software can be. One almost has to say: of course. White men don’t notice those things, and tech being such a white, male field is exactly where a lot of the problems come from.

But the film also broadens the scope beyond the technical side, considering more general problems with surveillance as well. And it’s not just a problem because automated surveillance is racist – there’s more to it than that.

Coded Bias also looks at the UK and at China, not just the USA, to consider how things are dealt with there, while never making it seem like somebody else’s problem just because it may appear worse somewhere else. Instead, the film makes clear that it is a global problem – the differences are only minor ones, in degrees of awfulness. Thus the film urges us to approach technology with caution and criticism. Just because it’s automated doesn’t make it better.

Summarizing: excellent.